What is a Headless CMS?

Headless CMS’s have become the hot topic in recent years along with preferences to move to microservices over monolithic architectures and to decouple parts of applications where possible. So, you may be wondering do I need one?

Let’s start with what a Headless CMS actually is. A typical CMS does two jobs;

Firstly, it provides a place for marketers to login and create pages of content that appear on the website. Some allow very basic content editing, other provide the capabilities to construct entire pages from pre-built components, preview what it looks like, apply personalisation rules, split testing and a whole host of other features.

Secondly, they allow developers to create templates of skins to render the pages to visitors. They also provide authentication, form data collection, track visits, browsing habits etc.

Essentially the functionality splits into what the marketer sees and what the visitor sees.

A Headless CMS on the other hand, chops of the second part and in its place leaves an API so that another application can pull content out of the CMS for it to display. The CMS then becomes a place solely responsible for managing content rather than managing a website.

Decoupling in this way offers a few advantages:

- The technology/platform is no longer determined by the CMS. You could have a .net based CMS and write the website in JavaScript, php, or any other language.

- The content can be provided to more than just a website. In the same way that digital asset management platforms specialise in serving digital assets to other systems. E.g. mobile app, web, print etc. A headless CMS can provide content to multiple other systems too.

- It’s much easier for a headless CMS to become a SASS offering. Most custom development when you install a CMS is to tailor the visitor website experience, rather than the admin experience. Once the visitor website is removed there leaves little reason for it to be a product you install and maintain making it much easier for vendors to supply as SASS.

So, should I have one?

With all these advantages the answer should be yes, right? Well its not quite that simple, there are also some disadvantages, so let’s answer some more questions to see if it’s really suited for your situation;

Do you want to use a different website language?

One of the advantages of going headless was that you could pick the language you write the head in, but which language do you want to write it in? Most articles will talk about writing the head in JavaScript frameworks such as React, Vuejs or Angular. The reason for this is simple, if you want to write your application in one of these then you’re a bit stuck for CMS choices as no viable CMSs exist written in React, Vuejs or Angular so using a headless CMS is the only option other than writing an entire bespoke admin.

But what if you want to write the head in .Net or PHP? A headless CMS won’t restrict you doing this in any way, in-fact Umbraco Heartcore even provides a .Net client library to help. The only thing you must question is if it’s going to give you more advantages than disadvantages. By cutting off the head you now have to create it, so are you creating something different to what Umbraco’s Head originally did or are you just recreating the same thing?

Is there a specific pre-built head you want to use?

An alternative to writing your own head is to buy one that is premade. The biggest downside I see to headless is that you have to build and support the head, so using one that is pre-built and compatible with your CMS is a big advantage.

However, this does also mean that you may end up paying a higher price in license fees. Unless your CMS costs decrease enough to cover the additional costs of the head, your ultimately now licensing two products which could add up to more than one.

How many heads are you going to have?

If the answer is one and it’s a website, then it’s highly likely that you don’t need a headless CMS as you’ll effectively end up writing an inferior head to the one, you’re replacing.

CMSs like Wordpress have almost 2 decades of work gone into advancing them at this point getting them to a place where they are feature rich, reliable and most importantly secure. Creating your own head will require you to do they work they have already done.

Are you building a website?

If the answer to this is no, then a headless website makes a great option to provide an admin experience for your content to become editable. They are pre-built, integrate with the popular enterprise authentication systems and offer great features around workflow and user permissions.

However, if the answer is yes, then you need to think carefully. Going truly headless has some quite major disadvantages for a website:

- You need to write and maintain the head, and if that head is doing the same thing the CMS one did than you’re just reinventing the wheel.

- As the CMS is now only providing content to another application less will be possible through the admin interface. E.g. No ability to preview pages, no drag and drop page creation.

- Creating things like campaign landing pages or any new page layout creation may no longer be possible without involving the IT team. Because the CMS is now only providing the content rather than the layout, unless the head provides an admin to manage this you could end up with very fixed page templates.

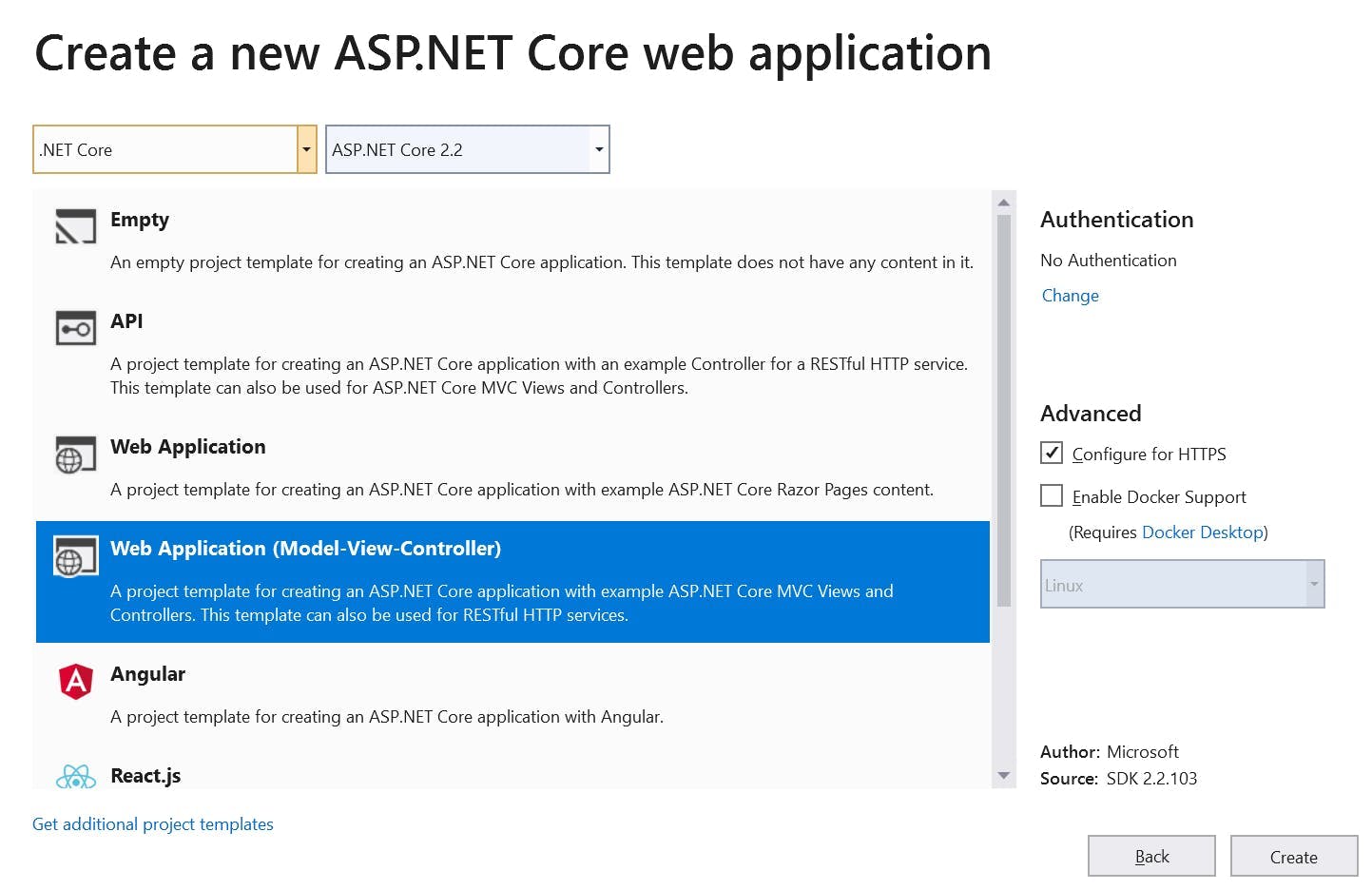

A CMS that can work both head on and headless may be a better option. For instance, Sitecore can be set up like a traditional CMS where the custom rendering logic is added to the main application. However, it can also be set up as a decoupled CMS where the page rendering is achieved using Angular, Vue or React, but note this is decoupled and not headless. The visitor site can be hosted separate from the CMS like with headless, but a lot of the logic is still provided by Sitecore so things like personalisation, admin previews, page construction, Sitecore forms etc are all still possible. It also can work in a truly headless way by using the same API that powers the decoupled head to power a bespoke non-Sitecore head, like a mobile app.

Is your content reusable?

A news corporation writing articles that appear in newspapers, websites or mobile apps are obviously creating a lot of reusable content that can stay the same on each destination.

Alternatively, a typical brochure site (done well) are created with a journey in mind where the whole page has been designed to achieve an outcome. Some content may just be a 3 words combined with an image to evoke an emotional response. Other content may be specifically written to fit a certain gap so that it appears the same size as 2 other pieces of content next to it. None of which may actually translate into mobile.

If this is the case what you may end up actually doing is rather than creating a content repository that can be reused. Is creating a repository where 90% can’t be reused and the other 10% is lost amongst it.